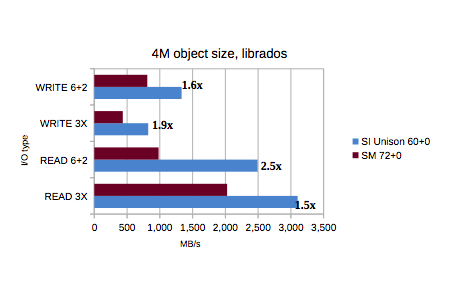

Unison Ceph beats reference architecture, including the flavor with NVMe drives

By joe

The paper is linked below. We focused on our product mix and the rough comparables in the report. Our units are available immediately as well, preloaded and preconfigured with Ceph. The takeaway is this:

[Unison Ceph performance report (PDF)](https://scalableinformatics.com/assets/documents/Unison-Ceph-Performance.pdf)

What’s really interesting in this is that the 36+2 reference architecture makes use of 2x NVMe drives. And as you can see, they really don’t help much in the tests. This is not to say NVMe is bad; it’s not. It is to say that overall architecture matters. You can’t build fast, efficient systems out of a box of software and random collections of hardware. You really do need to pay attention to design to get the best performance. You also can’t just drop a silver-bullet plugin card or disk into a unit and hope it does better. It won’t.

I should also point out that in these tests we used drives and SSDs (where we used SSDs) that were several generations old, while the reference architecture was kitted out with the latest and greatest. This is important, as an architecture that can’t drive systems efficiently won’t make effective use of its resources, while an architecture that can use its components effectively will often do far better. We saw this 2 decades ago in the R8000 vs DEC Alpha days. There was no possibility that the R8000 could beat the Alpha on computational code ... except that it did.

Architecture matters. Far more than other aspects. If your architecture can’t move the data, can’t process the data for storage, can’t write it out quickly, then chances are you really shouldn’t be using it. Massive data storage requires very well architected systems that can move data within and without. This excellent performance, coupled with the tremendous density (and therefore capacity) we bring to the table

[

](https://scalableinformatics.com/unison)

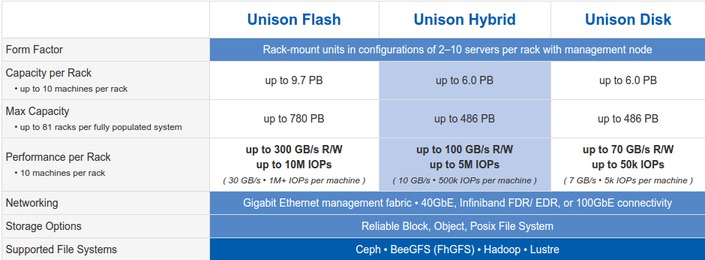

means you can store your data and work with it. This means you need to buy less kit to achieve your performance and capacity goals, which reduces TCO, among many other metrics. As we like to say, with us, you’ll spend less and do more. With others, the other way around.
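
The "buy less kit" point comes down to simple arithmetic: the number of units you need is set by whichever goal, throughput or capacity, is harder to hit. Here is a minimal sketch of that sizing calculation. The function name `units_needed` and every number in it are hypothetical placeholders for illustration, not figures from the report; plug in your own goals and measured per-unit numbers.

```python
import math


def units_needed(perf_goal_gbs, capacity_goal_tb, unit_perf_gbs, unit_capacity_tb):
    """Return how many units it takes to meet BOTH goals.

    All inputs are assumptions supplied by the reader:
    sustained throughput in GB/s and usable capacity in TB.
    """
    for_perf = math.ceil(perf_goal_gbs / unit_perf_gbs)
    for_capacity = math.ceil(capacity_goal_tb / unit_capacity_tb)
    # The tighter of the two constraints decides the purchase.
    return max(for_perf, for_capacity)


# Hypothetical goals: 20 GB/s sustained, 2000 TB usable.
# Hypothetical unit A: faster and denser. Hypothetical unit B: slower and less dense.
unit_a = units_needed(20, 2000, unit_perf_gbs=5, unit_capacity_tb=500)
unit_b = units_needed(20, 2000, unit_perf_gbs=2, unit_capacity_tb=250)
print(unit_a, unit_b)
```

With these made-up inputs, the slower and less dense unit is throughput-bound, so you end up buying extra boxes just to hit the performance goal, and that is where the TCO difference comes from.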